A non technical guide to scaling Magento (or any other website)

Performance & Scaling of a Magento web site are often confused. As a store owner who may not be technical a close analogy with real life will help in talking to your hosting providers and other experts.

It is no coincidence that hits to a website is called as traffic. We take this analogy further, to explain what factors matter to performance and scaling a website.

Website performance is like a car – higher performance cars drive faster and can cover a distance in a shorter time. Similarly, a higher performant website will serve a page fast. This is often measured as page load speed. A critical component of page load that the server is responsible for, is server response time. Like measuring performance of a car, measuring the page load speed is done in test mode with little or no traffic. Sometimes the performance is measured at a random time without looking at other traffic to the site. That is like test driving a car through traffic.

Scaling is like building highways and roads for the cars to move on. Highways are resources – CPU, memory, network that the hits to a website will utilize. The task of a Magento scaling expert is to architect a system – servers and sizes, services to run on each server, connectivity of the servers and access from internet, etc.

Hits to a website is like traffic of random cars on the highway. Each vehicle seems to have a mind of its own, joining and leaving the highway. Each visitor to the website will take their own journey visiting different pages.

Some Observations

Observation 1 : Like a car cannot drive at its highest speed possible at all times due to traffic, a website too cannot perform at its best best all the time. Understanding the factors that make the website perform at its optimal level all the time would be the task of both the developer and the server architect.

Observation 2: Like in traffic we have vehicles of different performance, in a website all URLs do not perform equally. A category page may not perform the same way as product detail page for example.

Observation 3: Better throughput will be achieved with the same resources, if the vehicle performance is improved – some bottlenecks can be avoided if the vehicles moved faster. Similarly, a better performing website is likely to scale better.

Observation 4: Like in traffic in order to scale one has to find the bottleneck in the highway that is causing the current slowdown, fix it and then look for the next bottleneck. This is a change in the hosting infrastructure and architecture, different from the website performance.

Observation 5: A traffic designers job is to ensure maximum number of vehicles can pass the highway at the best speed for each vehicle. A hosting designers job is to ensure maximum traffic is handled in a way that each hit is best served.

What lessons can we learn from traffic management

Lesson 1: To better manage traffic highway system has to be designed that is scalable. Mostly by bottleneck analysis we can derive what needs to be done. For example, is database a bottleneck, is file system access a bottleneck, etc.

Lesson 2: When traffic increases, possibly beyond the capacity of the highway, traffic management has to account for one more variable – starvation. The amount of time a vehicle has to wait at a metered light to enter the highway. The longer the wait, more frustration from the drivers who will find a better route to their destination.

Lesson : On a highway lanes are drawn. A better hosting will make lanes. The way most hosting providers take traffic is analogous to not having lanes with the hope that the maximun throughput will be achieved by letting hits contend for resources. The operating system stands to decide what process gets to use resources.

What are the recommended steps to achieve scaling?

As a first step to server side scaling, we move the database layer out to another instance or server. The main reason is that it is better to allocate resources in a single server when the workload is similar.

In our multi part series we take you through achieving scaling. The series is aimed at a store owner who need not be technical but is ultimately responsible to take a decision on the store. Until now you had to depend on an expert. However, there are no clear answers and the expert is making judgement calls based on most likely their prior experience. As a matter of fact no 2 webstores so results of efforts vary. This series will make you better informed.

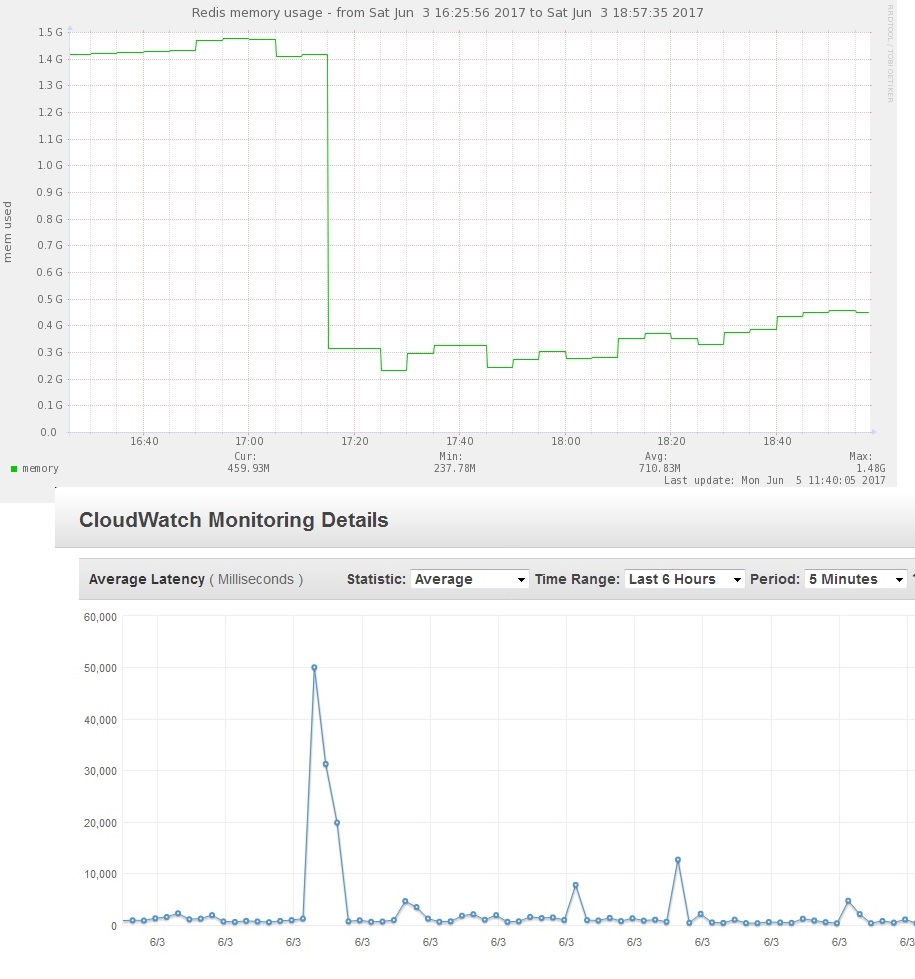

We start by looking at a popular form of scaling – using FPC or Full Page Cache and other types of caches.

In order to help with scale, another important aspect is code quality specifically related to scaling. Scaling is difficult to achieve reliably if there is any externally dependent blocking service executed as part of the hit. Examples include sending email directly to a recipient or a external service, sending information from the server to an external service. All such processing should be done with some form of a queue handled either by an different process such as a cron job. Until Magento 1.9.2.4, the default email sending was inline for example, slowing the order success page being shown.

Autoscaling adds and removes servers (and hence resources) – something traffic managers cannot do with highways. This gives website scaling an advantage to be more elastic.